Maps appropriate to context

The digital maps found on today’s mobile devices derive from cartographic techniques hundreds of years old. User context, however, has changed. The situations in which users find themselves accessing maps on a mobile device are very different from spreading a paper map on a table.

The HaptiMap project is an EU-funded effort to develop digital mapping that integrates haptic, audio and visual feedback and is better suited to the contexts in which mobile users find themselves.

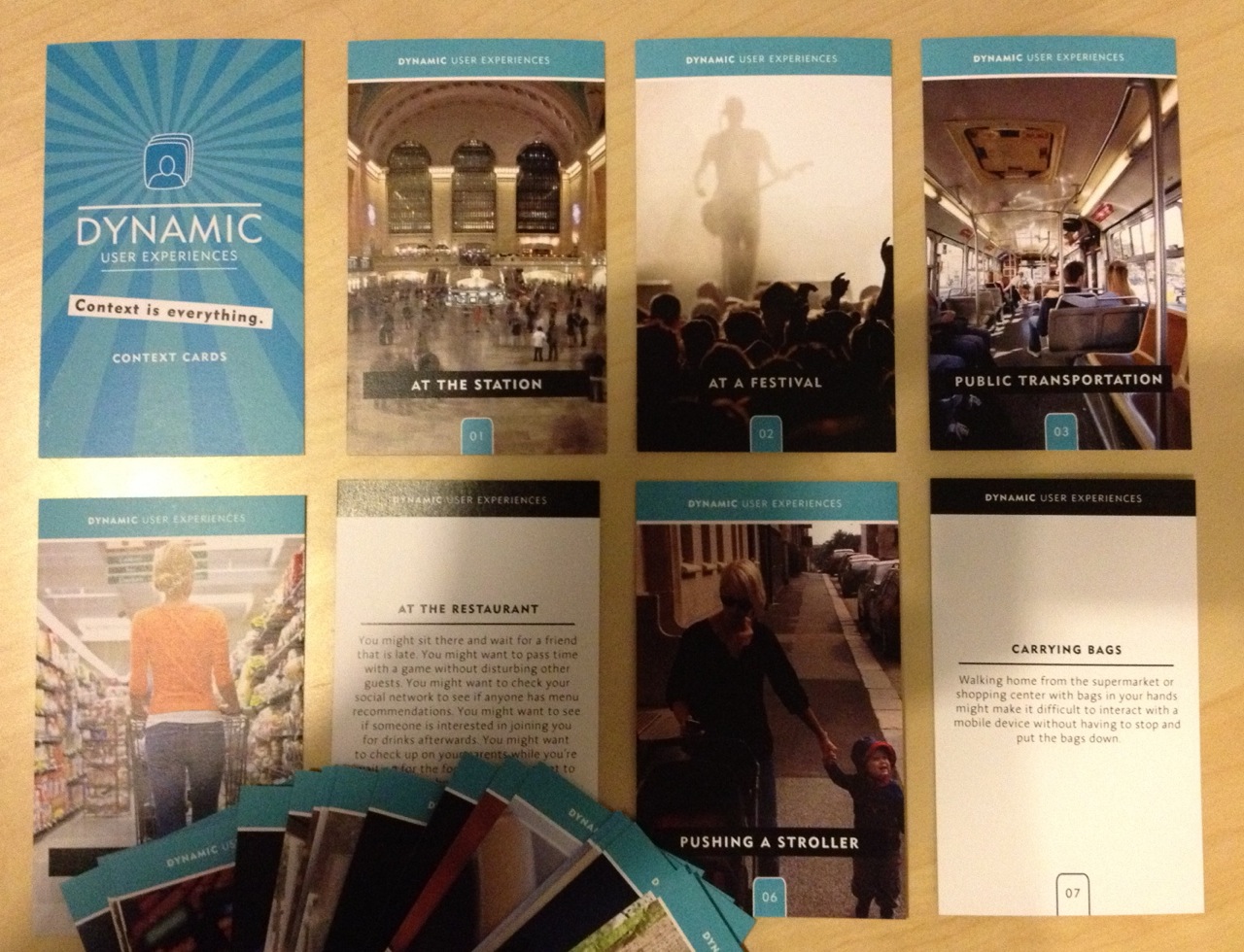

To focus the project team on the importance of context, HaptiMap developed a set of 30 annotated photo cards illustrating some of the different scenarios in which users might be accessing maps, ranging from horse riding to carrying shopping bags. Charlotte Magnusson, who was part of Lund University’s engagement with the project, highlighted the importance of these cards in their design process. By necessity most design work takes place in an office environment, where even the most empathetic of practitioners find it hard to envisage the real contexts in which their products will be used. The cards provide HaptiMap’s team with a constant reminder of the specific contextual conditions which may affect the user experience.

The result is a freely available toolkit of ready-to-use Android modules which add a variety of haptic, audio and visual effects to mapping applications. Magnusson showed me some examples, including a map where the user could run their finger over roads and place names while feeling tactile confirmation and hearing the descriptions read aloud. There are obvious benefits for partially sighted users, but also broader use cases for a variety of ‘eyes free’ scenarios when it isn’t convenient or safe to dedicate visual attention to the map.

The HaptiMap team have also experimented with a haptic vocabulary for communicating direction instructions through tactile feedback. There are separate sequences of vibrations, of varying length and frequency, used to indicate left, right, straight ahead and distance to target. Similar to the increasing frequency of beeps in car parking sensors, HaptiMap’s tactile vocabulary is remarkably effective at conveying navigation without ever needing to look at a visual display.
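To make the idea of a haptic vocabulary concrete, here is a minimal sketch in Python. It is not HaptiMap's actual encoding: the pattern table, the function names and all of the timing values are assumptions invented for illustration. It shows how each instruction could map to a distinct sequence of pulses, and how the gap between pulses could shrink as the target gets closer, in the manner of a parking sensor.

```python
# Hypothetical vocabulary: each instruction is a list of
# (vibrate_ms, pause_ms) pulses. These patterns are invented for
# illustration and are not taken from the HaptiMap toolkit.
PATTERNS = {
    "left": [(100, 100), (100, 100)],      # two short pulses
    "right": [(400, 100)],                 # one long pulse
    "straight": [(100, 100), (400, 100)],  # short pulse, then long pulse
}


def to_timeline(instruction):
    """Flatten a pattern into alternating on/off durations in milliseconds."""
    timeline = []
    for on_ms, off_ms in PATTERNS[instruction]:
        timeline.extend([on_ms, off_ms])
    return timeline


def pulse_interval_ms(distance_m, min_ms=150, max_ms=1500, max_distance_m=100.0):
    """Return the gap between pulses for a given distance to target.

    The gap shrinks linearly as the target gets closer, so pulses arrive
    more often near the destination. Distances are clamped to the range
    0..max_distance_m. All default values are assumed, not measured.
    """
    clamped = max(0.0, min(distance_m, max_distance_m))
    return round(min_ms + (max_ms - min_ms) * clamped / max_distance_m)


if __name__ == "__main__":
    print(to_timeline("left"))
    print(pulse_interval_ms(10), pulse_interval_ms(90))
```

A real vocabulary would need the kind of user testing the team describes, since patterns that look distinct on paper may not feel distinct in a pocket or on a bicycle.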

Magnusson, herself an experienced researcher of haptics, explained that the team had spent considerable time trying to understand which types of vibration feedback seemed most natural and were best at capturing user attention.

The potential applications for this are numerous: Magnusson described how the French government’s research agency – CEA – has been using similar techniques to develop devices better suited to the needs of field workers.

HaptiMap’s toolkit is available to download and is a worthwhile resource that should encourage user experience practitioners to experiment beyond the confines of visual design. It is an important addition to the nascent, but growing, set of resources available to help designers afford equal importance to visual, tactile and audible elements within mobile user experience. MEX is an active proponent of this approach and continues to raise awareness and facilitate the creation of new ideas in this area under the auspices of Pathway #9.
