Whispering to the future – a tale of navigating with Google Now

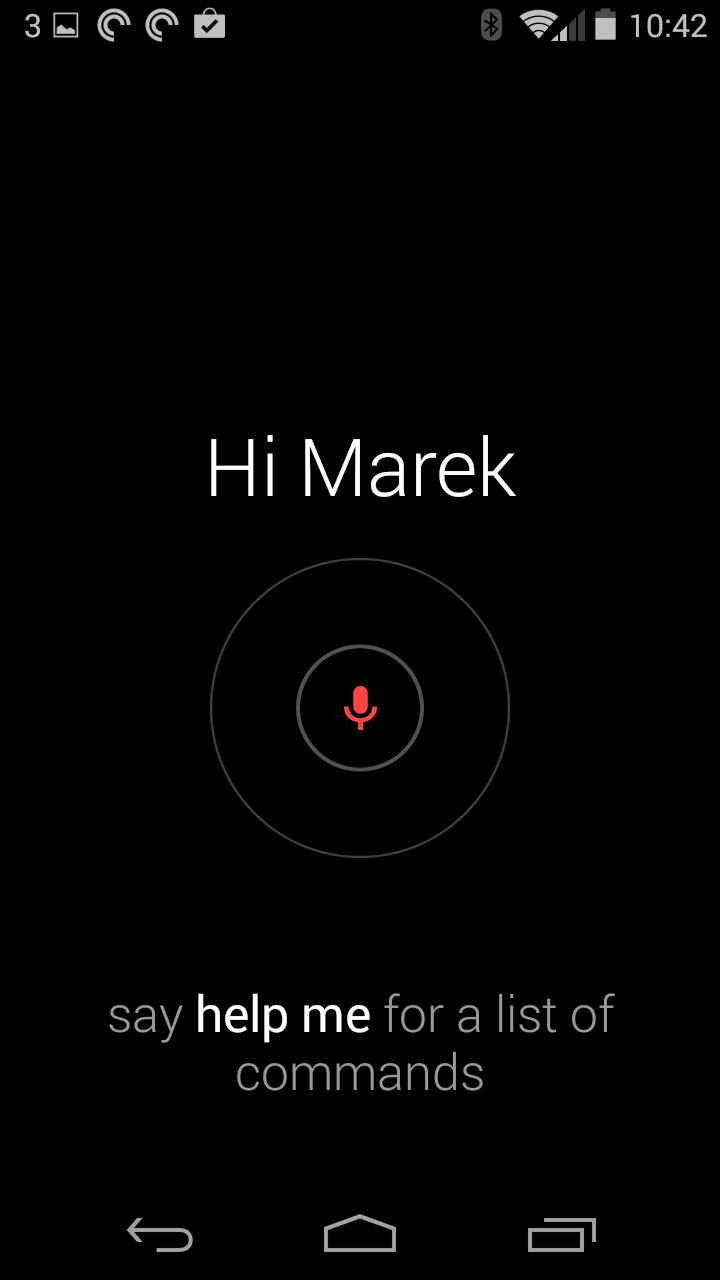

“Okay Google Now,” I whispered. Nothing. The device stayed silent.

I looked up the street to check whether I was still out of earshot of the lady and child I’d noticed earlier. “Okay Google Now,” I tried again, a little louder, but acutely aware of how odd I’d look if they overheard me. I’d slowed my pace slightly to avoid catching up with them. At the back of my mind I wondered if someone was watching from a window of the nearby houses, bemused by my attempts to converse with the cloud.

Voice interfaces may hold the promise of eliminating visible barriers between us and the machines, like screens and buttons, but this attempt to converse with Google Now felt anything but natural. It may not have required me to physically touch the device, but it was certainly having physical, real-world consequences: I was whispering, changing my pace and glancing about like a fugitive.

I was a victim of my own ambition perhaps. As I emerged from the train station, I had this notion I would put my headphones in, ask Google for walking directions and set off to my destination on the other side of town, with Google’s audio instructions playing over the top of a podcast.

It is a vision of the future I’ve been promised for some time – I can remember Nokia demonstrating this sort of thing with its in-built mapping applications about a decade ago. The reality of the user experience in 2014, however, highlighted the big questions which remain.

While in theory the Moto X I was using supported ‘touchless control’, I still needed to stop, take out the device and interact with the screen to begin navigating. The technology components were already in place, but the user experience broke down quickly. I could initiate the voice interaction by saying ‘Okay Google Now’ (the Moto X is always listening for this command, courtesy of a dedicated chip) into the microphone embedded in my headphone wire, then ask for walking directions to my destination. However, there was no confirmation it had understood me. I stopped in the street, took out the phone and found it was offering me some route options, but I had to interact with the screen to choose one. I was expecting a conversation with the device to get me heading in the right direction, but instead the voice interaction had essentially been a less effective way to move through the same dialogues I would have seen on-screen.

Similarly, there was no way to start my podcast playing through a voice command, as the application I use is not yet integrated with Google Now. I could ask Google Now to play me some music from its own Google Music application, but the podcasts I wanted were excluded.

Once the audio navigation was started, it was quite effective. I could walk through the streets, headphones in, phone in my pocket, with timely and accurate directions playing over the podcast. It was an experience I would want to use frequently, as I liked the way I could be fully immersed in exploring the town, without having to glance at my phone, whilst knowing I was heading in the right direction to my next appointment.

By the time I found myself catching up with the lady and child, I was sufficiently confident to try something else. Would Google be able to handle placing a call for me, so I could tell the person I was meeting at my destination when to expect me?

This was where the experience started to break down again. I realised I felt self-conscious issuing commands to my phone. At a distance, other pedestrians probably couldn’t even see I was wearing headphones, and would imagine I was talking to thin air. The ‘always listening’ Moto X may be capable of touchless control, but societal norms mean that most people still expect to see you interacting with an artefact. Talking aloud to yourself is still more likely to suggest ‘madman’ than ‘futurist’.

My furtive whisper, therefore, was not recognised, so had to be repeated several times – another sure sign of the unsound mind. I then asked it to place my call and was advised there were several numbers stored for that particular contact. One of them, I knew, was their overseas mobile – which I definitely didn’t want to call and incur international roaming rates. Google could tell me how it had labelled these numbers, and ask me to say which one I wanted to call, but the labels themselves didn’t mean much – ‘mobile, mobile, mobile and home’ were the options. I had to pause and take out the phone again to check which of the three mobile numbers I actually wanted.

The call was eventually made and I carried on walking, only to find the audio directions – which had played over my podcast – were no longer offered while I was talking to my contact. Instead, the phone vibrated and I had to take it out of my pocket again to follow the map visually while I carried on my conversation. The audio didn’t come back, even after I ended the call.

While the technical architecture may be in place to navigate the world hands-free by following Google Maps’ reassuring prompts, the user experience still stumbles in 2014. I’d like to see future efforts focused on solving several issues:

- Re-imagine the voice interactions as a true conversation, not as a series of replacements for on-screen actions. Crucially, accept that even with Google’s relatively impressive language accuracy, almost every voice interaction is going to incur some sort of error and the quality of the experience will be determined by how well these errors are handled. Users should never reach a dead end from which they cannot extricate themselves without taking out their phone.

- Empower users to respect local etiquette by customising voice commands. ‘Okay Google Now’ may be self-explanatory in Silicon Valley, but almost everywhere else in the world, I’m sure users would prefer to choose their own trigger phrase, one that made them sound like less of a fool. In Norwich, where I found myself for this experiment, perhaps I’d have been better off with ‘Ahaa!’?

- Combine buttons and other sensors with the voice commands to provide a greater range of interaction modes. For instance, tapping the phone screen in my pocket, something I could do without looking, might tell it I’d had enough of walking and now wanted to hop on public transport, or pressing the button on the headphone cable could give me an audio history of the nearest landmark.
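To make the first of these points concrete, here is a minimal sketch of how the ‘mobile, mobile, mobile and home’ dead end might be handled as a conversation. It is an illustration only, not how Google Now works: the `PhoneNumber` fields, the `describe` and `choose_number` functions and the spoken wording are all hypothetical, the example numbers are fictional, and speech recognition is simulated by a list of strings in which `None` stands for an utterance that was not recognised (a whisper, say).

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class PhoneNumber:
    label: str    # label as stored in the address book, e.g. "mobile"
    country: str  # assumed extra field used to tell numbers apart
    digits: str   # the full number as stored


def describe(options: List[PhoneNumber]) -> List[str]:
    """Spoken description of each number, adding detail only when
    the stored label alone would be ambiguous."""
    labels = [n.label for n in options]
    spoken = []
    for n in options:
        if labels.count(n.label) > 1:
            spoken.append(f"{n.country} {n.label} ending {n.digits[-3:]}")
        else:
            spoken.append(n.label)
    return spoken


def choose_number(
    options: List[PhoneNumber],
    heard: List[Optional[str]],
    max_attempts: int = 3,
) -> Tuple[Optional[PhoneNumber], List[str]]:
    """Run the dialogue; return the chosen number (or None) and
    every prompt that would have been spoken to the user."""
    spoken = describe(options)
    menu = ", ".join(f"{i}: {s}" for i, s in enumerate(spoken, start=1))
    valid = {str(i): n for i, n in enumerate(options, start=1)}
    prompts: List[str] = []
    replies = iter(heard)

    for attempt in range(max_attempts):
        if attempt == 0:
            prompts.append(f"Which number? {menu}. Or say cancel.")
        else:
            # Error path: repeat the choices rather than falling silent.
            prompts.append(f"Sorry, I didn't catch that. {menu}. Or say cancel.")
        reply = next(replies, None)
        if reply is None:
            continue  # nothing recognised, so ask again
        reply = reply.strip().lower()
        if reply == "cancel":
            prompts.append("Okay, cancelled.")
            return None, prompts
        if reply in valid:
            return valid[reply], prompts

    # Out of attempts: exit by voice, leaving a spoken way back in.
    prompts.append("I'll leave the call for now. Say 'call' to try again.")
    return None, prompts


contact = [
    PhoneNumber("mobile", "UK", "07700900123"),
    PhoneNumber("mobile", "Australia", "+61491570156"),
    PhoneNumber("mobile", "UK", "07700900456"),
    PhoneNumber("home", "UK", "01632960789"),
]
```

Calling `choose_number(contact, [None, "3"])` simulates one unrecognised whisper followed by a clear answer: the choices are read out twice and the third number is selected, without the phone leaving the pocket. Every path through the function ends in a spoken outcome, which is the property I was missing on the street.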

Designing for voice, the wider audible dimension and other non-visual interactions like haptics is going to be an essential skill for digital experience designers. We’ve known this for some time and made it a theme of MEX events and publishing for several years. It feels early, still, but there’s an opportunity here for the forward-thinking to start equipping themselves with skills which will be in high demand in the years to come.

[…] ‘Whispering to the future – a tale of navigating with Google Now‘, Marek Pawlowski’s 2014 essay on voice UIs […]
